Plagiarism is anything but pleasant. No one likes to find their own content on another website without credit. It creates duplicate content, so two sites end up competing with each other, which can look suspicious to both search engines and users. Beyond that, copying someone else's content without permission is unethical, it is not allowed, and it should not happen.

Plagiarism basically means copying text from one site to another without citing the original source. The Plagiarism Checker miner in Marketing Miner treats two pages as plagiarism when a significant part of their content matches.
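To make "a significant part of the content matches" more concrete, here is a minimal sketch of one common way to measure text overlap between two pages: comparing overlapping word sequences (shingles). This is only an illustration. Marketing Miner does not publish how its miner compares pages, and the function names and the five-word shingle size are assumptions made for this example.

```python
from __future__ import annotations

import re


def shingles(text: str, size: int = 5) -> set[tuple[str, ...]]:
    """Return the set of overlapping word sequences (shingles) in the text."""
    words = re.findall(r"\w+", text.lower())
    if len(words) < size:
        # Text shorter than one shingle: treat the whole text as a single shingle.
        return {tuple(words)} if words else set()
    return {tuple(words[i:i + size]) for i in range(len(words) - size + 1)}


def overlap_ratio(original: str, candidate: str, size: int = 5) -> float:
    """Share of the original's shingles that also appear in the candidate."""
    source = shingles(original, size)
    if not source:
        return 0.0
    return len(source & shingles(candidate, size)) / len(source)


# Hypothetical page texts, for illustration only.
mine = "one two three four five six"
theirs = "one two three four five seven"
print(overlap_ratio(mine, theirs))  # 0.5
```

A ratio close to 1.0 would suggest that most of your text appears on the other page. What counts as "significant" is a judgment call and depends on the length and type of content.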

When to use Plagiarism Checker

You can use this miner if you suspect that your content has been copied elsewhere without your knowledge, and you want to find those sites.

Also, if you create a lot of content on the web and you know that there is a significant chance that it will be copied to another site, it makes sense to check for plagiarism on a regular basis.

How to check for Plagiarized Content

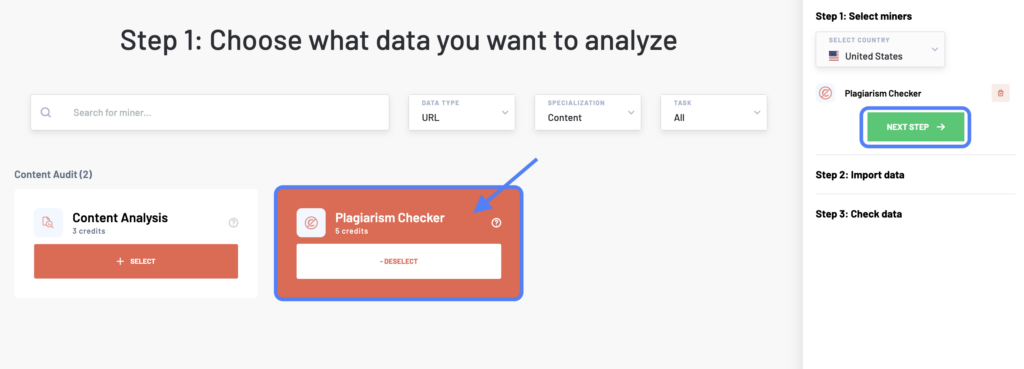

After logging into your Marketing Miner account, click the Create Report button in the top right corner. Then, select the Plagiarism Checker and your target country.

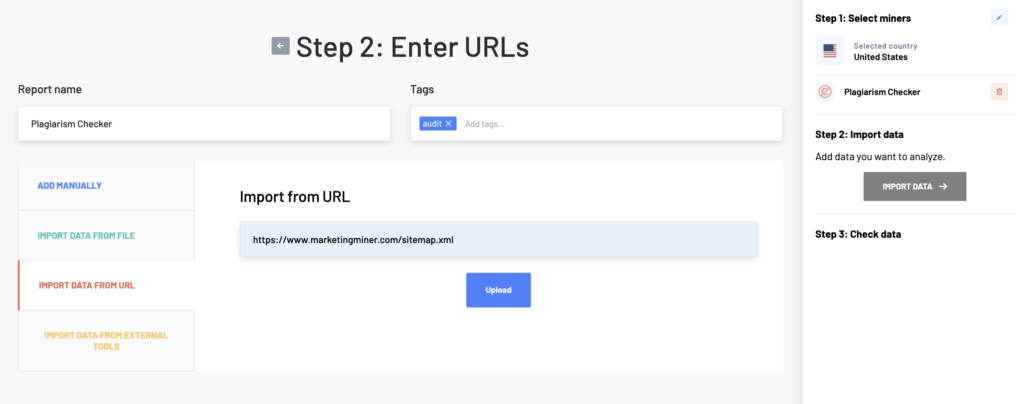

In the next step, enter a list of all the web pages you want to analyze. There are several ways to do this: you can copy and paste URLs (one per row), upload a data file, add a sitemap, or import data from external tools (Google Analytics, Search Console, or Google Sheets).

You can also use this tool to analyze all the URLs stored in your sitemap. Just select the Import Data from URL option and enter your sitemap URL to load it. Then, click on Import Data to check your list of URLs for plagiarized content.
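If you would rather review or trim the list before pasting it in (one URL per row), you can pull the URLs out of a sitemap yourself. The sketch below assumes a standard XML sitemap that follows the sitemaps.org protocol. The function name and the sample sitemap are made up for this example, and it is not part of Marketing Miner.

```python
from __future__ import annotations

import xml.etree.ElementTree as ET

# Namespace defined by the sitemaps.org protocol.
SITEMAP_NS = "{http://www.sitemaps.org/schemas/sitemap/0.9}"


def urls_from_sitemap(xml_text: str) -> list[str]:
    """Return every <loc> value found in the sitemap XML.

    For a sitemap index file, the returned values are the URLs of the
    child sitemaps rather than of individual pages.
    """
    root = ET.fromstring(xml_text)
    return [loc.text.strip() for loc in root.iter(f"{SITEMAP_NS}loc") if loc.text]


# Hypothetical sitemap content, for illustration only.
sample = """<urlset xmlns="http://www.sitemaps.org/schemas/sitemap/0.9">
  <url><loc>https://example.com/</loc></url>
  <url><loc>https://example.com/blog/post-1</loc></url>
</urlset>"""

# One URL per row, ready to paste into the input field.
print("\n".join(urls_from_sitemap(sample)))
```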

Once the report is generated, you will be emailed the analyzed data.

Plagiarism Checker report example

Report columns

- Input: Analyzed URL.

- Plagiarism URL: URL where part of your text has been copied.

- Duplicate preview: Link to report in Marketing Miner. After clicking on this link, you will see duplicate content that has been detected on both URLs.

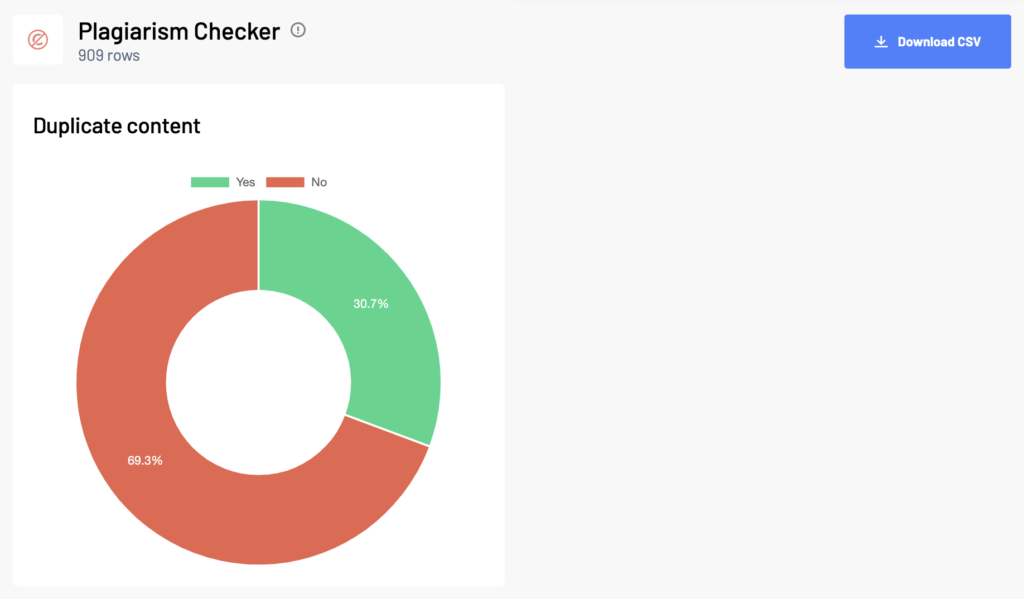

Report data

First, check the whole report. The tool may also flag duplicate content within your own site, such as pages that are very similar or recycled. In that case, you can either edit the content on the page or use a canonical tag to tell search engines that the content is duplicated and which URL to prefer.
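When a report is long, it can help to separate matches within your own site from matches on other domains before deciding what to do. The sketch below assumes the report rows have already been loaded as dictionaries keyed by the column names listed above (Input and Plagiarism URL). How you load the export and the helper names used here are assumptions for this example.

```python
from __future__ import annotations

from urllib.parse import urlsplit


def host(url: str) -> str:
    """Return the lower-cased hostname without a leading 'www.'."""
    name = (urlsplit(url).hostname or "").lower()
    return name[4:] if name.startswith("www.") else name


def split_matches(rows: list[dict[str, str]]) -> tuple[list[dict[str, str]], list[dict[str, str]]]:
    """Split report rows into (internal, external) matches by hostname."""
    internal, external = [], []
    for row in rows:
        same_site = host(row["Input"]) == host(row["Plagiarism URL"])
        (internal if same_site else external).append(row)
    return internal, external


# Hypothetical report rows, for illustration only.
rows = [
    {"Input": "https://example.com/a", "Plagiarism URL": "https://www.example.com/a-copy"},
    {"Input": "https://example.com/a", "Plagiarism URL": "https://other-site.org/stolen"},
]
internal, external = split_matches(rows)
print(len(internal), len(external))  # 1 1
```

Internal matches are candidates for editing or a canonical tag, while external matches are the ones worth investigating as possible plagiarism. Note that this simple comparison treats subdomains such as blog.example.com as a different site.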

If you find copied content from your site on another site and you are convinced that they have stolen it from you, you should contact them. You can ask them to either remove the text from the page entirely or to give you credit by placing a link to your original content.